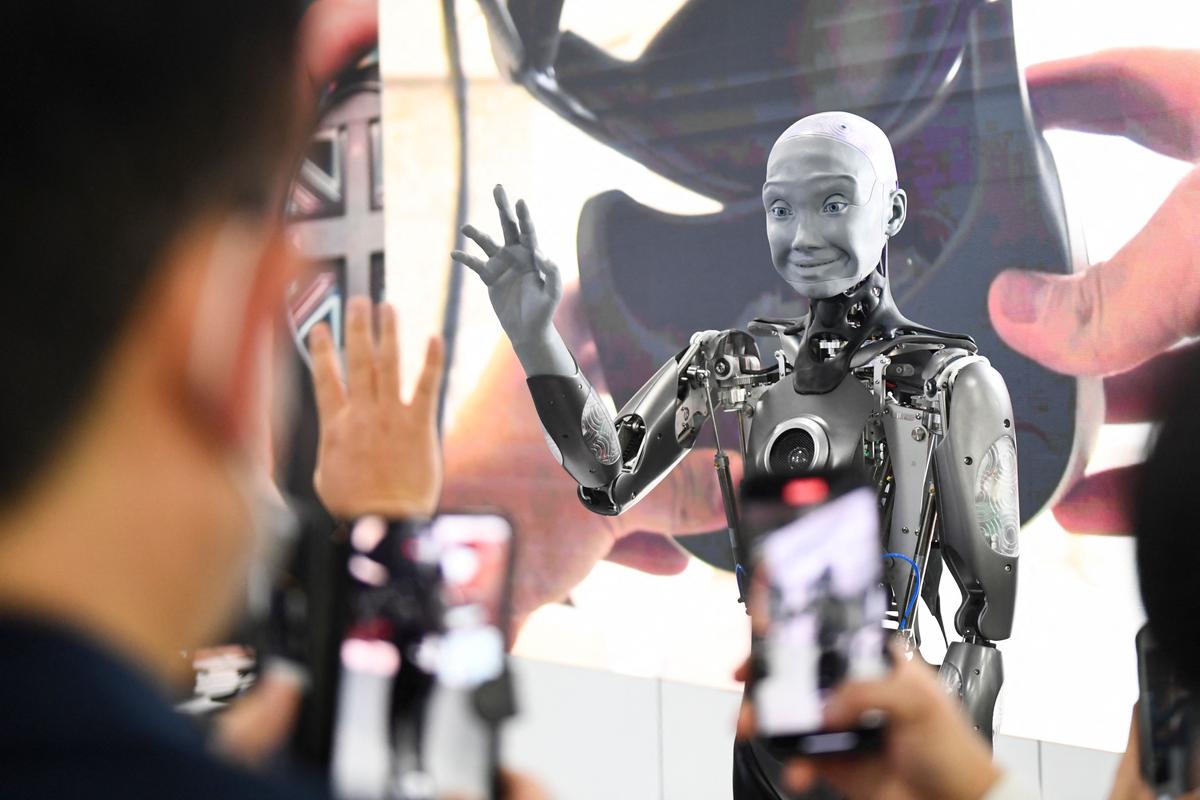

Google has unveiled its latest artificial intelligence model, paving the way for the development of sentient robots of the kind so far seen only in science fiction.

The Robotic Transformer 2 (RT-2) is trained on both web and robotics data and can translate this knowledge into generalized instructions for robotic control, according to a July 28 report by Google DeepMind.