Deep learning has found a remarkable number of applications in just a few years: Google has used it for its image recognition and translation tools, and medical companies are developing programs that can detect diseases.

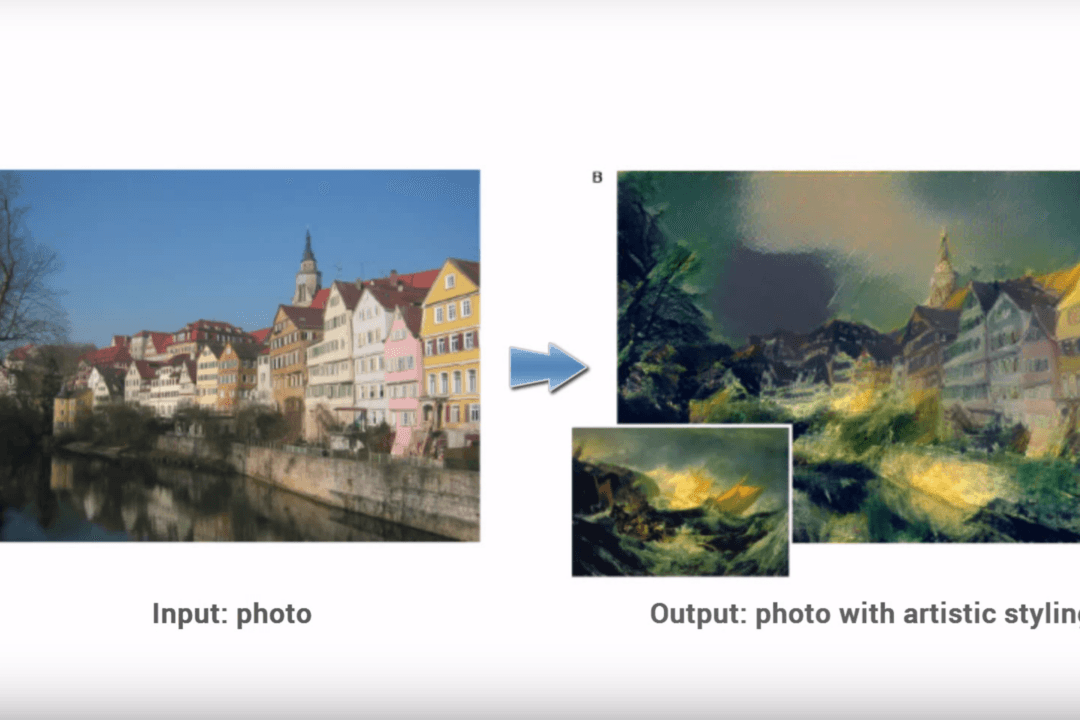

Now, it’s being applied to the replication and understanding of art. A group of researchers at the University of Tubingen in Germany developed an algorithm that can distill the essence of a painting’s “style” and transform any image to fit that style, allowing the computer program to “paint” in the manner of whatever artwork it is given.

“The system uses neural representations to separate and recombine content and style of arbitrary images, providing a neural algorithm for the creation of artistic images,” the researchers wrote.

A photograph of a row of apartments by the river in Tubingen, Germany, was filtered separately through the styles of a series of paintings, including J.M.W. Turner’s “The Wreck of a Transport Ship,” Van Gogh’s “The Starry Night,” and Edvard Munch’s “The Scream.”