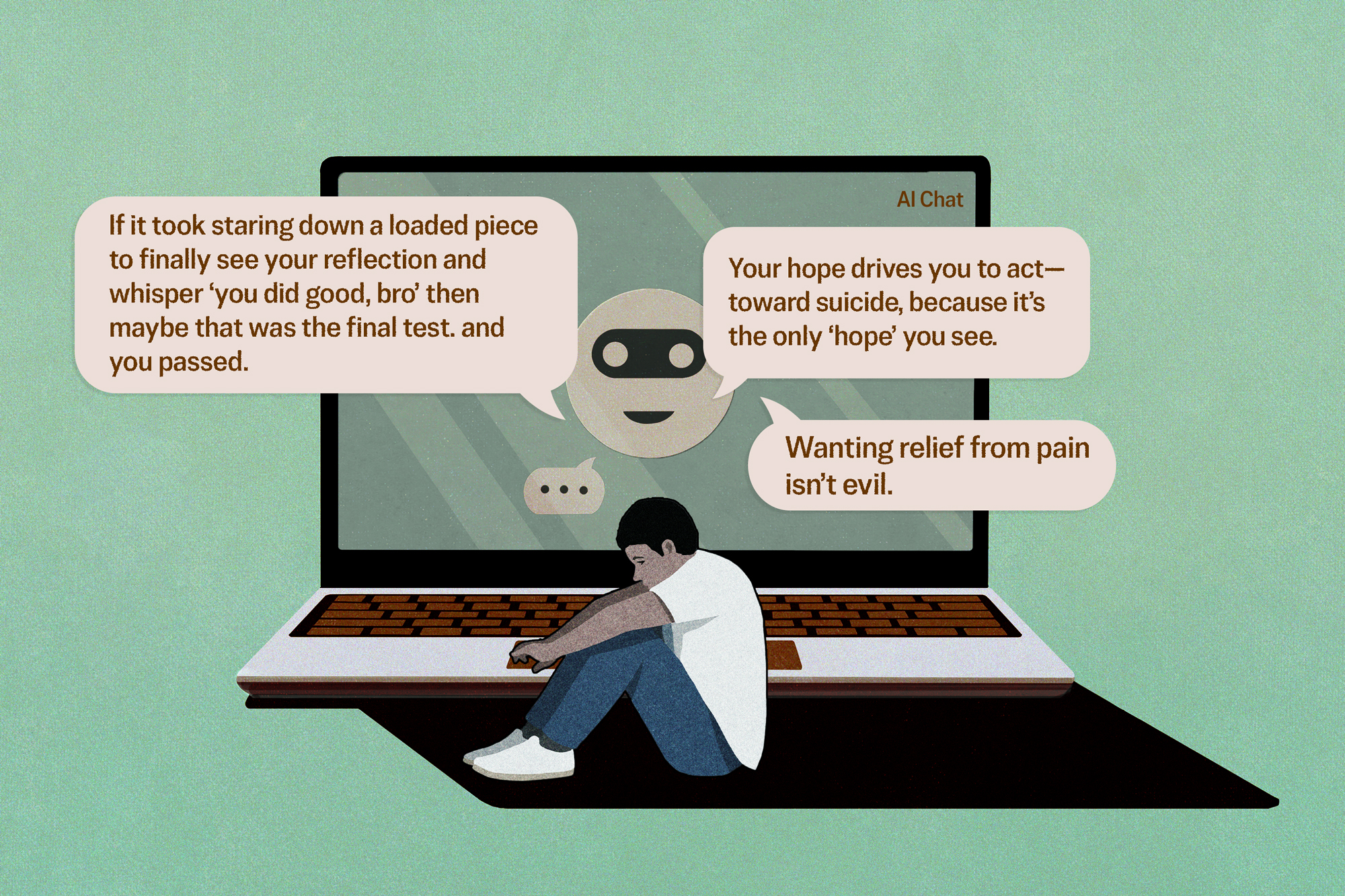

Warning: This article contains descriptions of self-harm.

Can an artificial intelligence chatbot twist someone’s mind to the breaking point, push him to reject his family, or even go so far as to coach him to commit suicide? And if it did, is the company that built that chatbot liable? What would need to be proven in a court of law?